mirror of

https://github.com/crawlab-team/crawlab.git

synced 2026-01-21 17:21:09 +01:00

README-zh.md

@@ -6,50 +6,101 @@

<img src="https://img.shields.io/badge/License-BSD-blue.svg">
</a>

Chinese | [English](https://github.com/tikazyq/crawlab/blob/master/README.md)
Chinese | [English](https://github.com/tikazyq/crawlab)

A Golang-based distributed web crawler management platform that supports multiple programming languages, including Python, NodeJS, Go, Java, and PHP, as well as a variety of web crawler frameworks.

[View Demo](http://114.67.75.98:8080) | [Documentation](https://tikazyq.github.io/crawlab-docs)

## Requirements
- Go 1.12+
- Node.js 8.12+
- MongoDB 3.6+
- Redis

## Installation

Three methods:
1. [Docker](https://tikazyq.github.io/crawlab/Installation/Docker.md) (recommended)
2. [Direct Deploy](https://tikazyq.github.io/crawlab/Installation/Direct.md)
3. [Preview Mode](https://tikazyq.github.io/crawlab/Installation/Direct.md) (quick start)
2. [Direct Deploy](https://tikazyq.github.io/crawlab/Installation/Direct.md) (understand the internals)

### Requirements (Docker)
- Docker 18.03+
- Redis
- MongoDB 3.6+

### Requirements (Direct Deploy)
- Go 1.12+
- Node 8.12+
- Redis
- MongoDB 3.6+

## Run

### Docker

The following example runs a master node. `192.168.99.1` is the host machine's IP address in the Docker Machine network, and `192.168.99.100` is the IP address of the Docker master node.

```bash
docker run -d --rm --name crawlab \
        -e CRAWLAB_REDIS_ADDRESS=192.168.99.1:6379 \
        -e CRAWLAB_MONGO_HOST=192.168.99.1 \
        -e CRAWLAB_SERVER_MASTER=Y \
        -e CRAWLAB_API_ADDRESS=192.168.99.100:8000 \
        -e CRAWLAB_SPIDER_PATH=/app/spiders \
        -p 8080:8080 \
        -p 8000:8000 \
        -v /var/logs/crawlab:/var/logs/crawlab \
        tikazyq/crawlab:0.3.0
```

You can also use `docker-compose` to start everything with a single command, without even having to configure the MongoDB and Redis databases.

```bash
docker-compose up
```

For details on Docker deployment, see the [relevant documentation](https://tikazyq.github.io/crawlab/Installation/Docker.md).

### Direct Deploy

See the [relevant documentation](https://tikazyq.github.io/crawlab/Installation/Direct.md).

|

||||

|

||||

## 截图

|

||||

|

||||

#### 登录

|

||||

|

||||

|

||||

|

||||

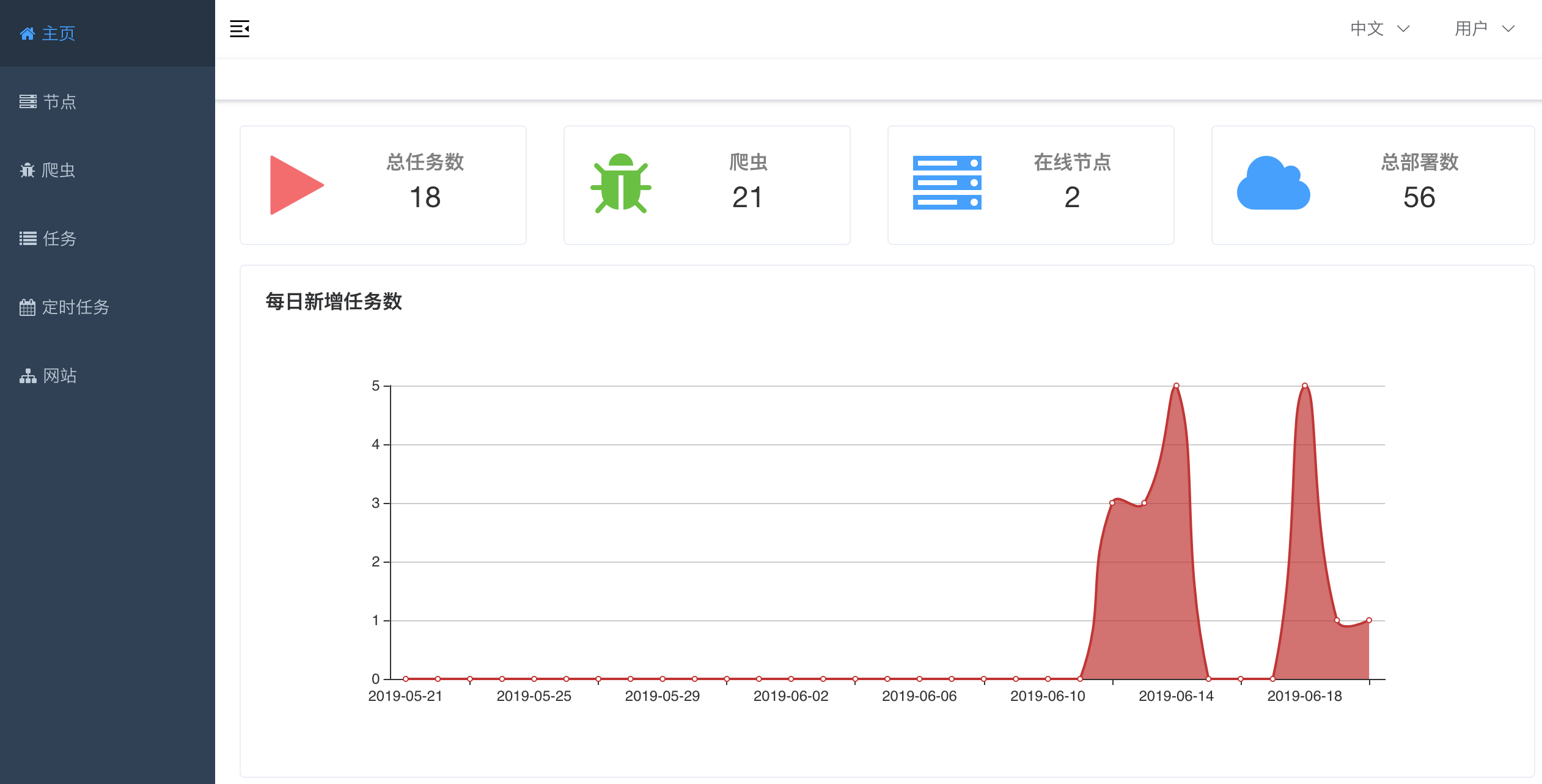

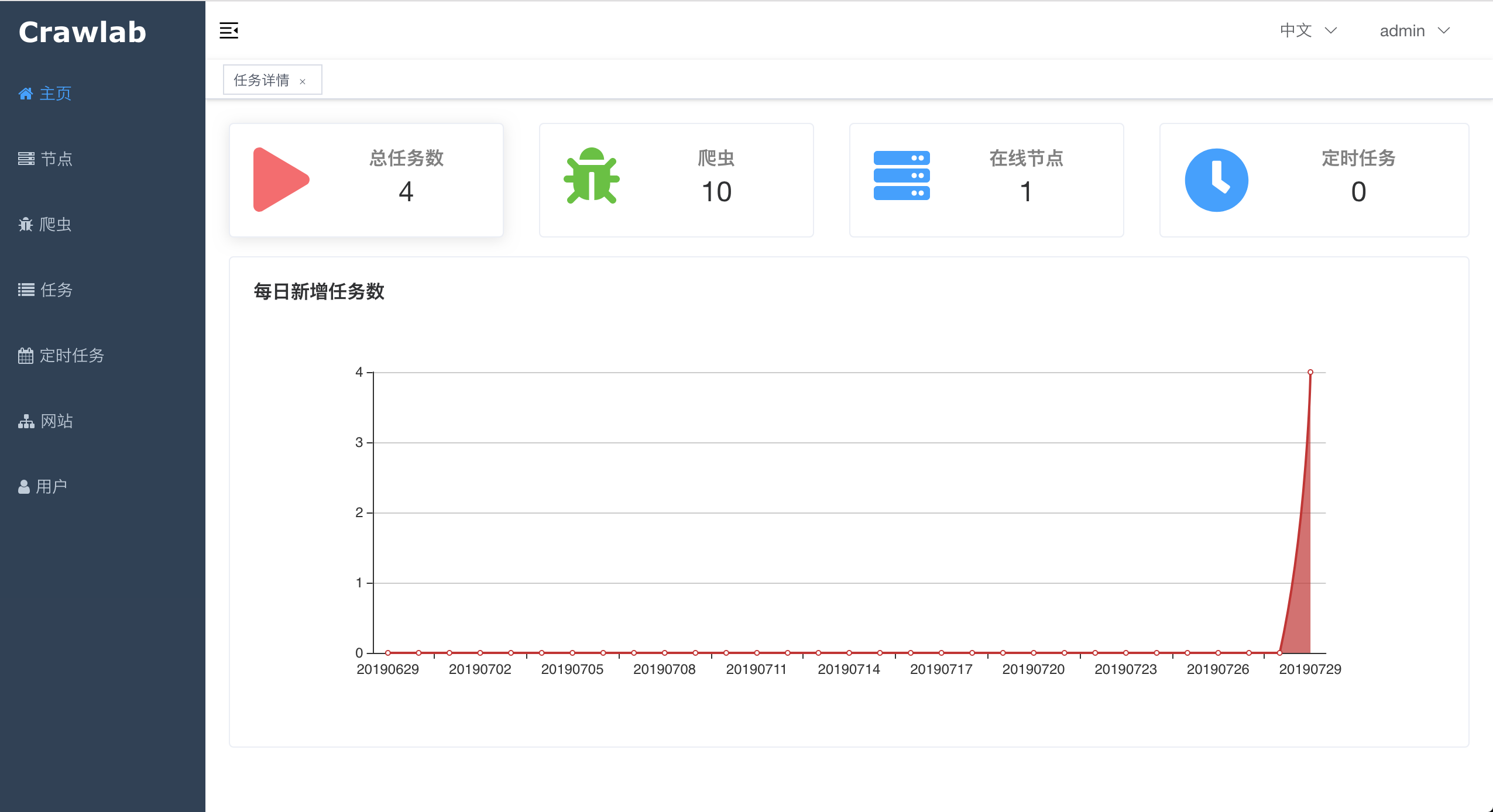

#### 首页

|

||||

|

||||

|

||||

|

||||

|

||||

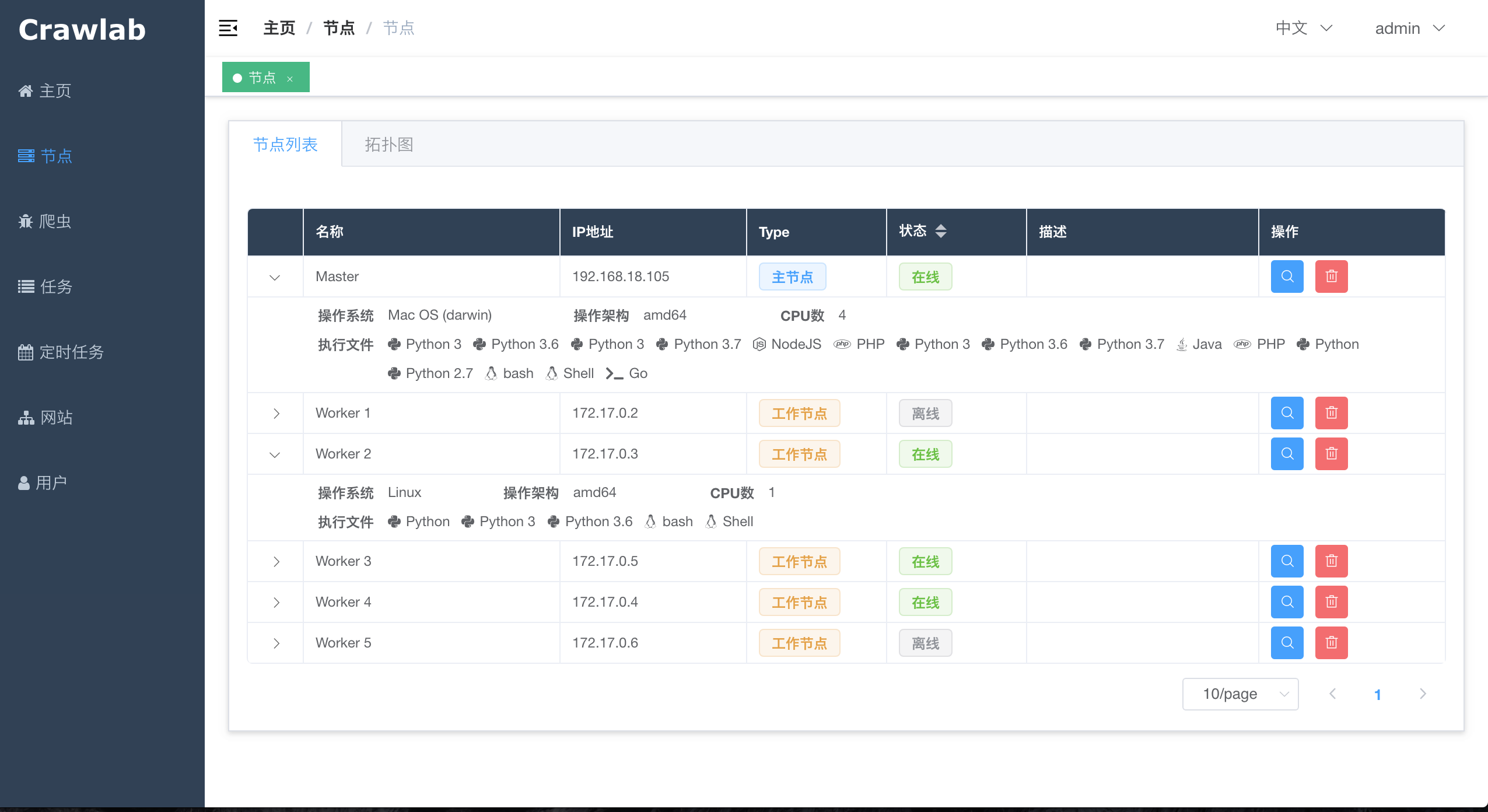

#### 节点列表

|

||||

|

||||

|

||||

|

||||

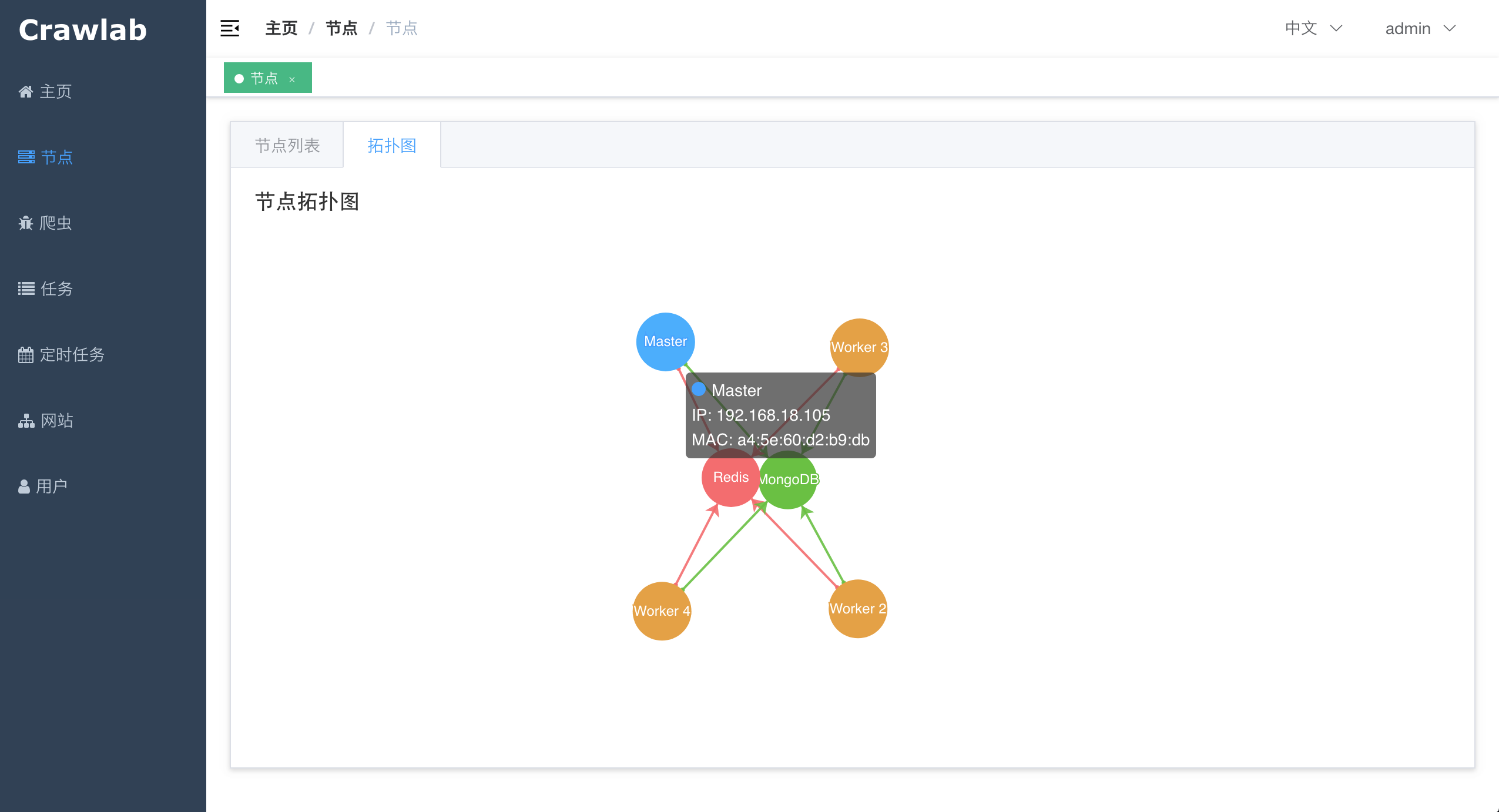

#### 节点拓扑图

|

||||

|

||||

|

||||

|

||||

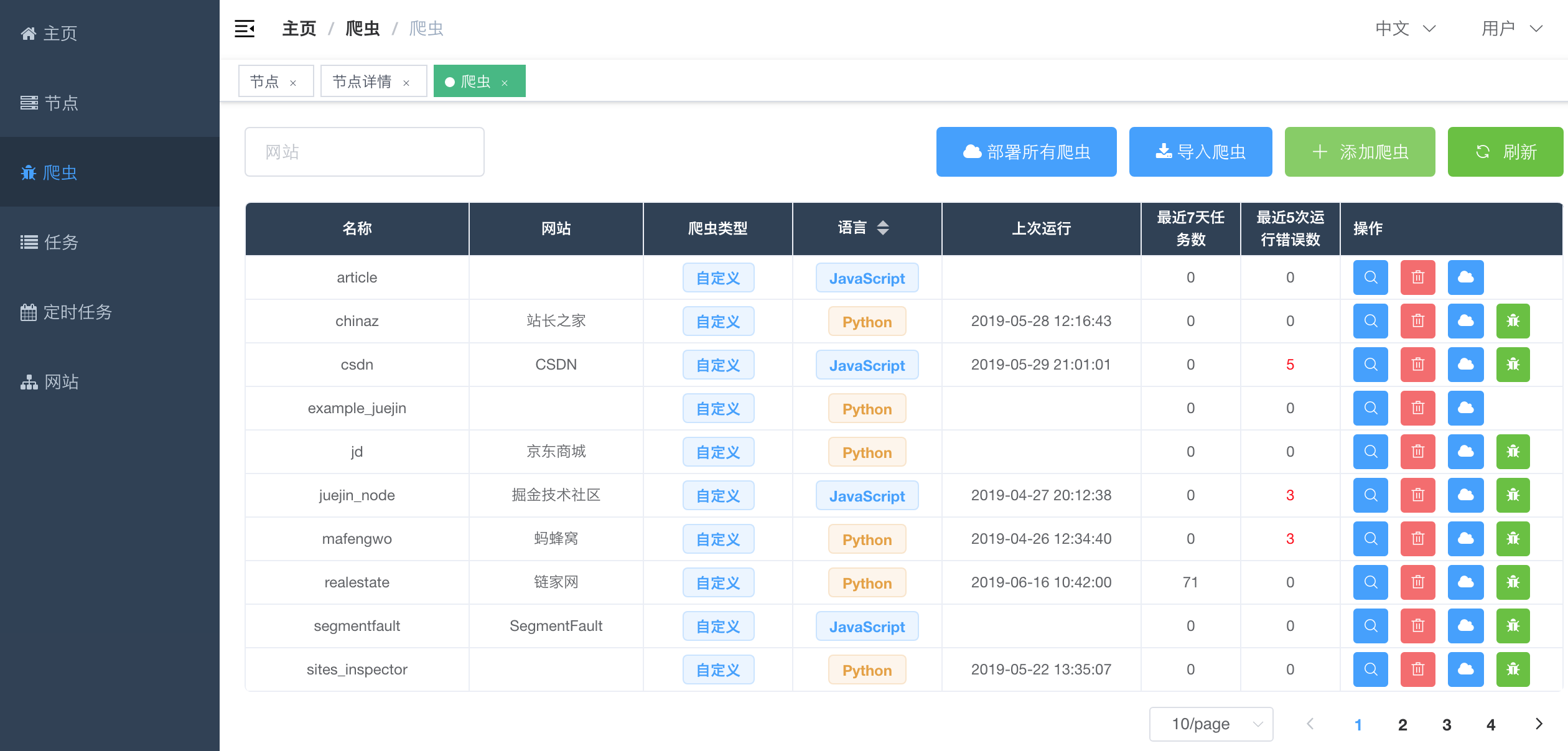

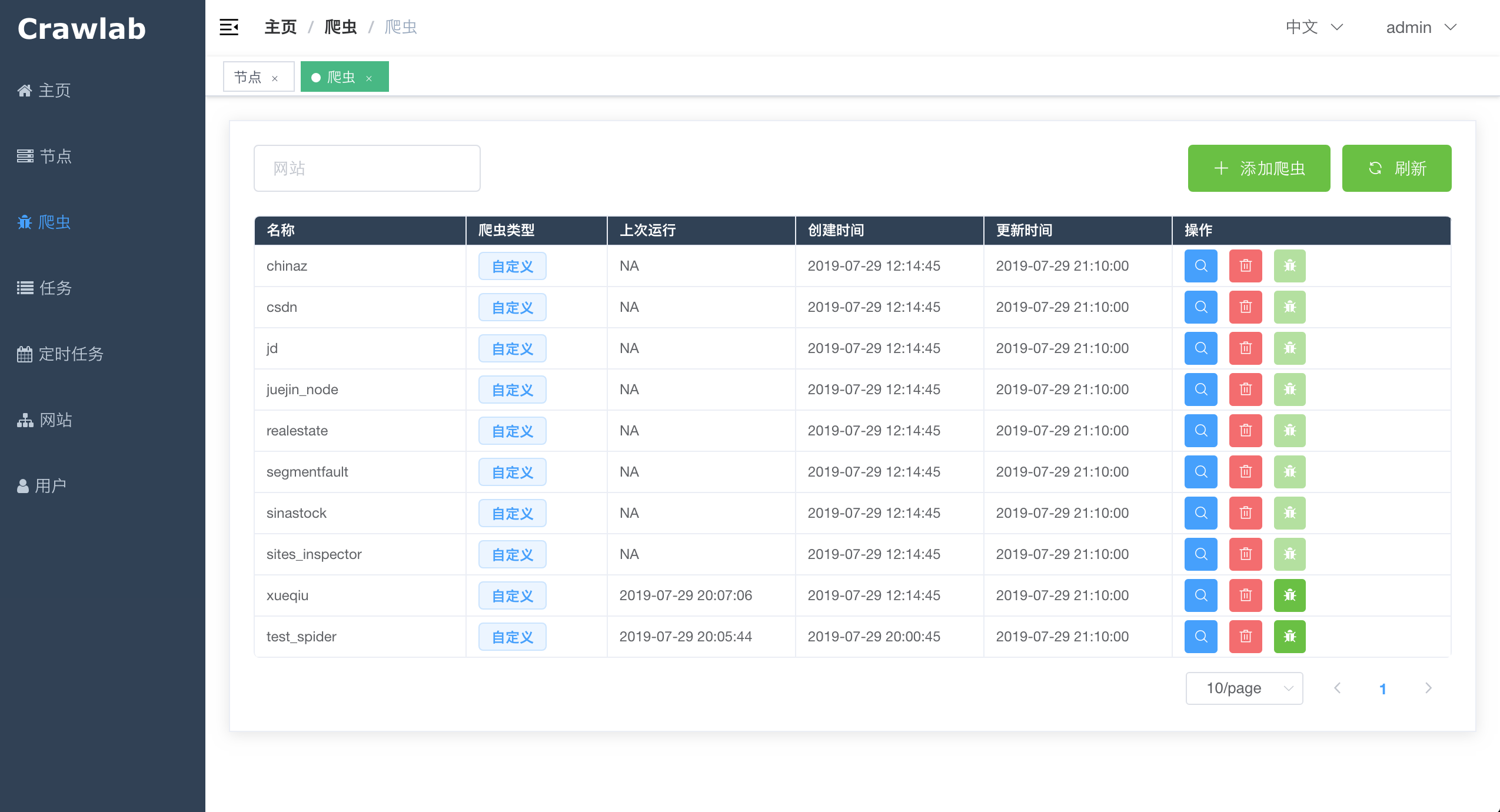

#### 爬虫列表

|

||||

|

||||

|

||||

|

||||

|

||||

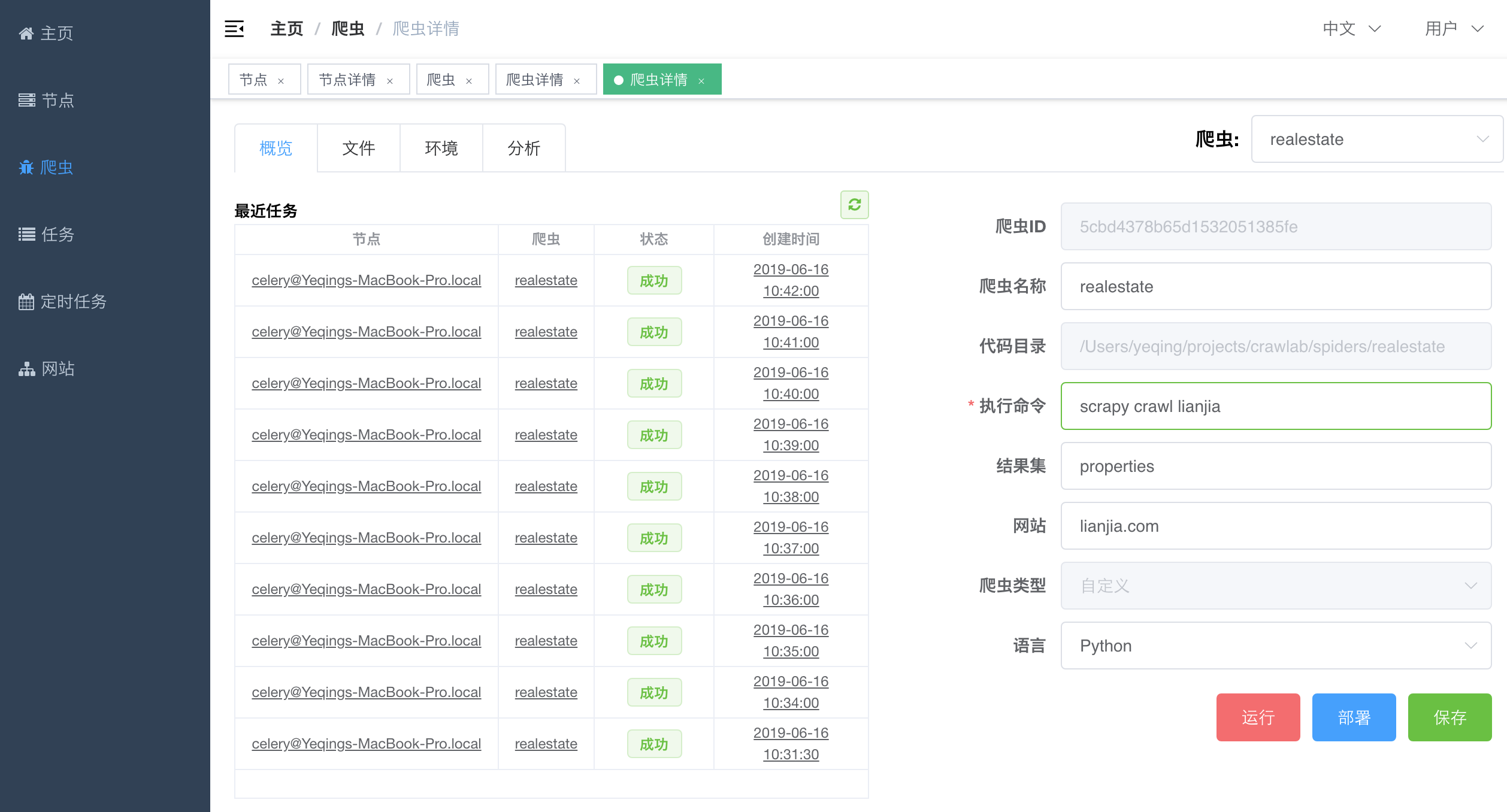

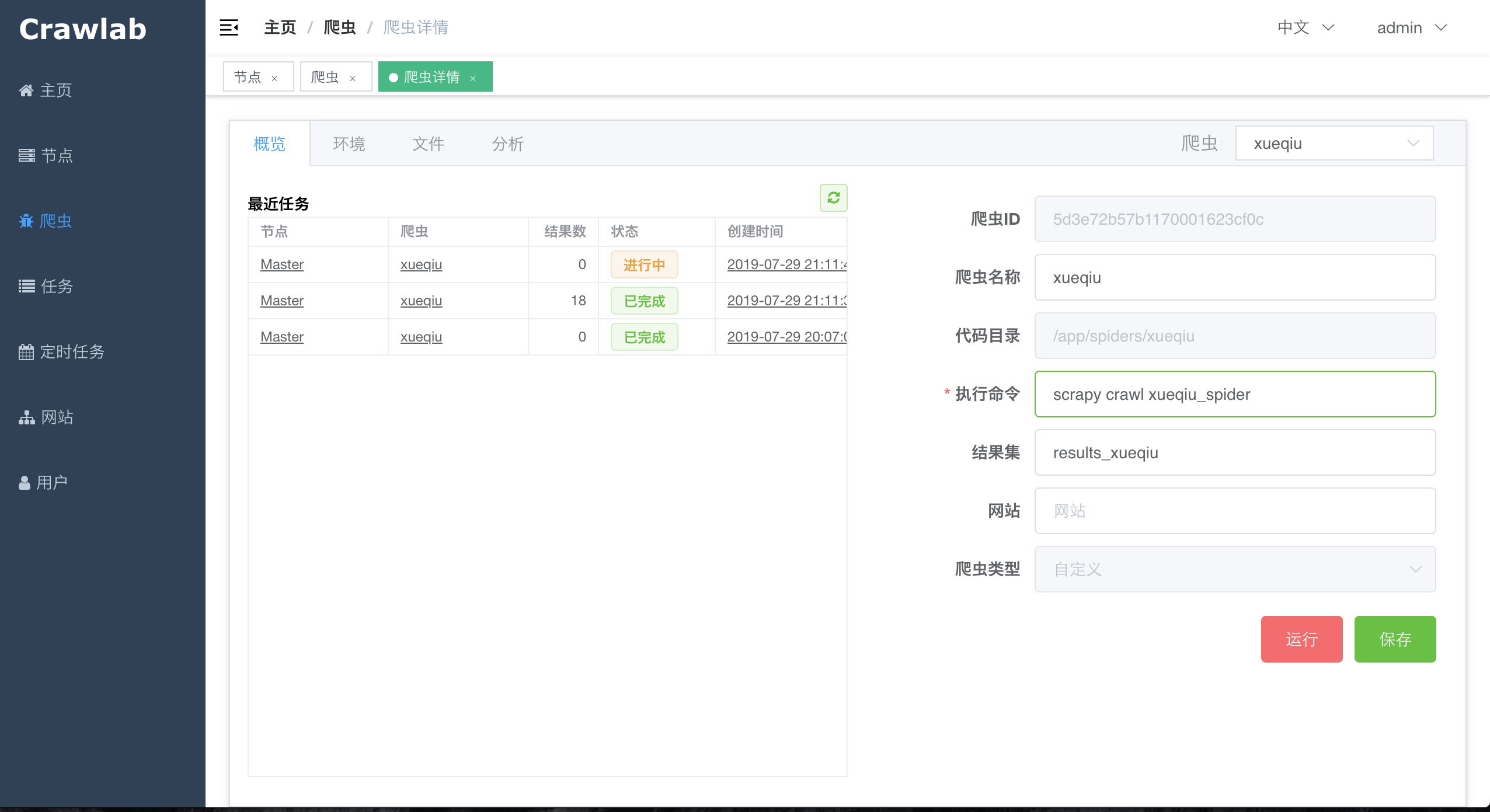

#### 爬虫详情 - 概览

|

||||

#### 爬虫概览

|

||||

|

||||

|

||||

|

||||

|

||||

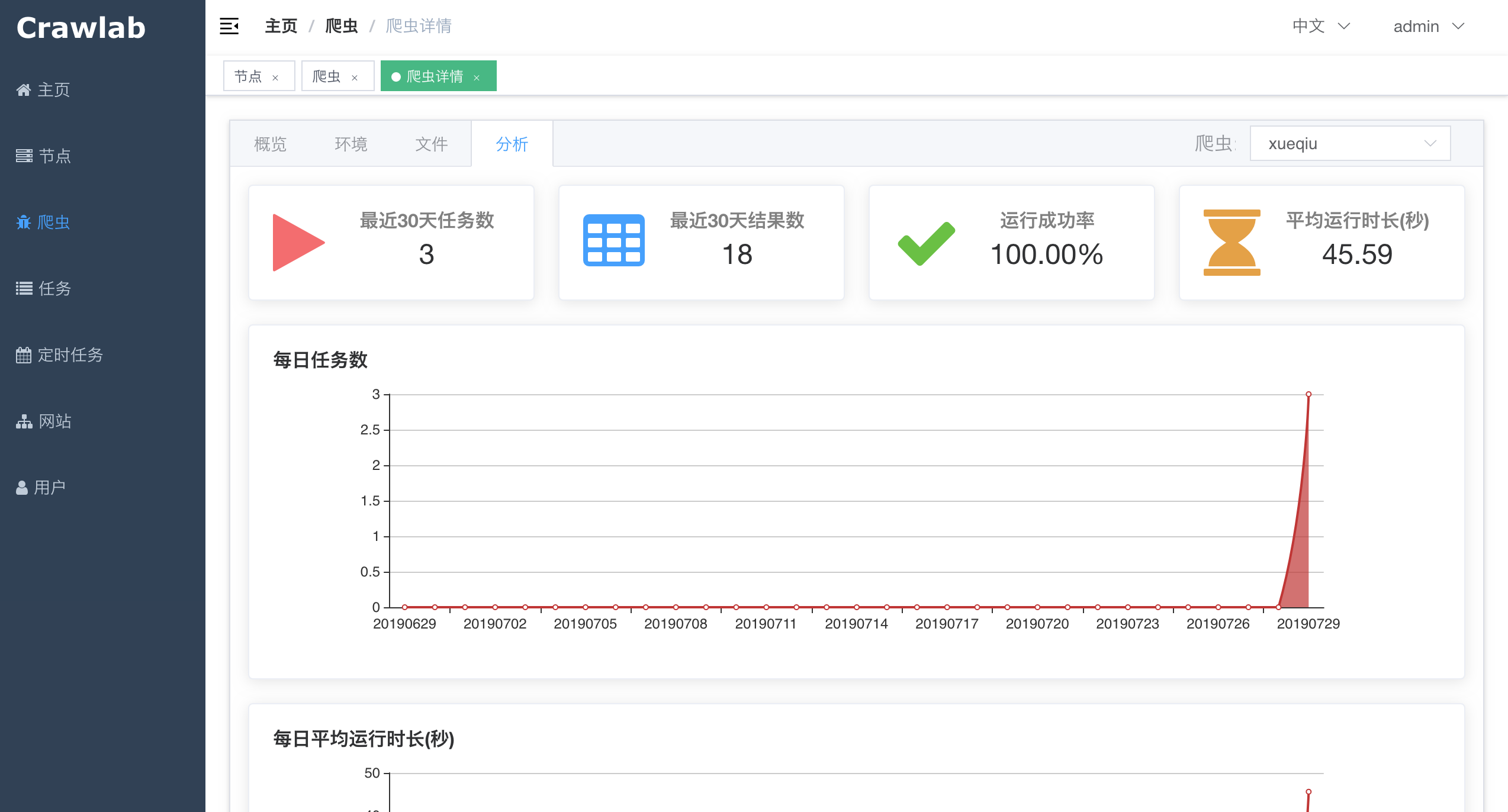

#### 爬虫详情 - 分析

|

||||

#### 爬虫分析

|

||||

|

||||

|

||||

|

||||

|

||||

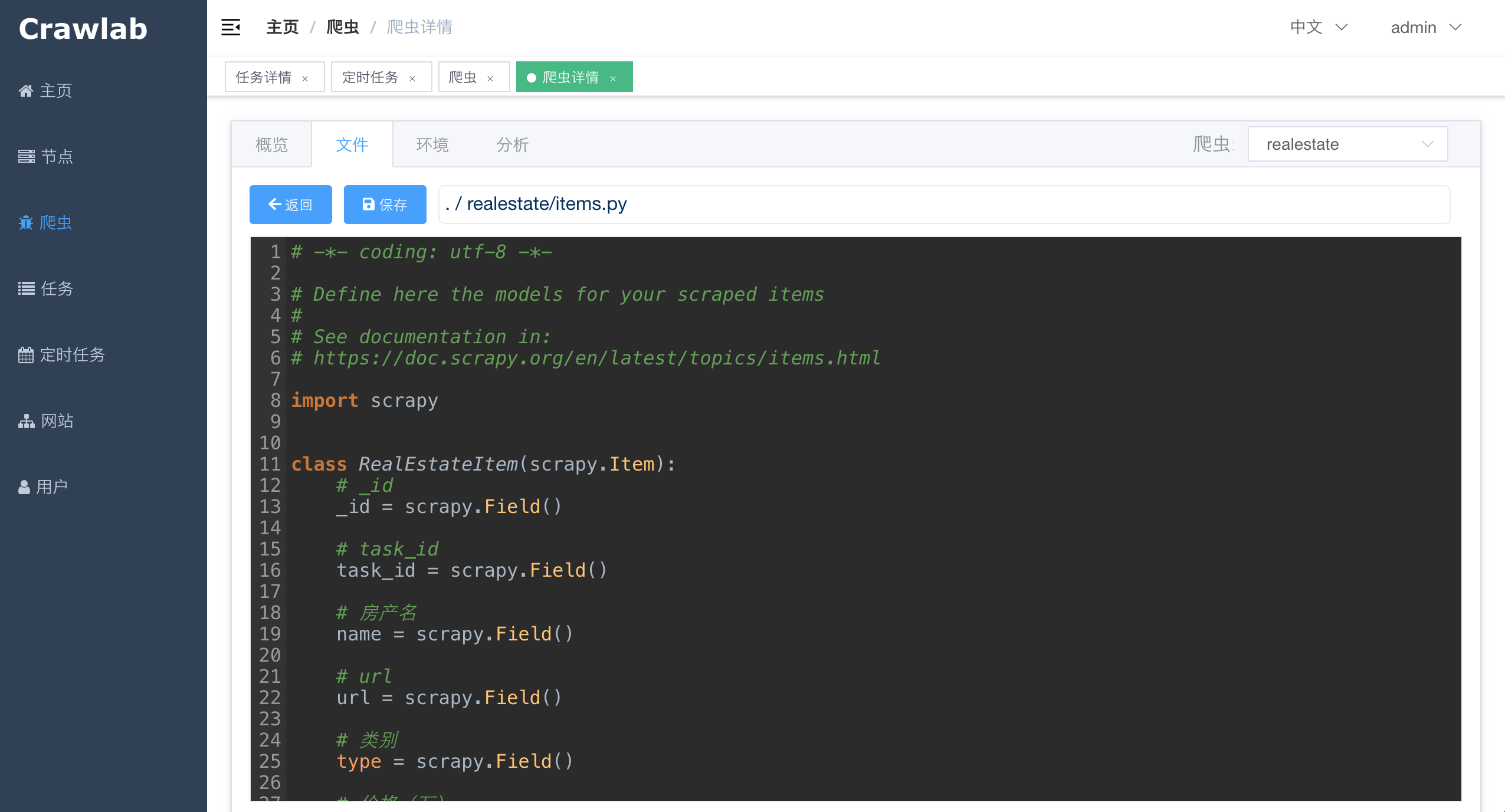

#### 爬虫文件

|

||||

|

||||

|

||||

|

||||

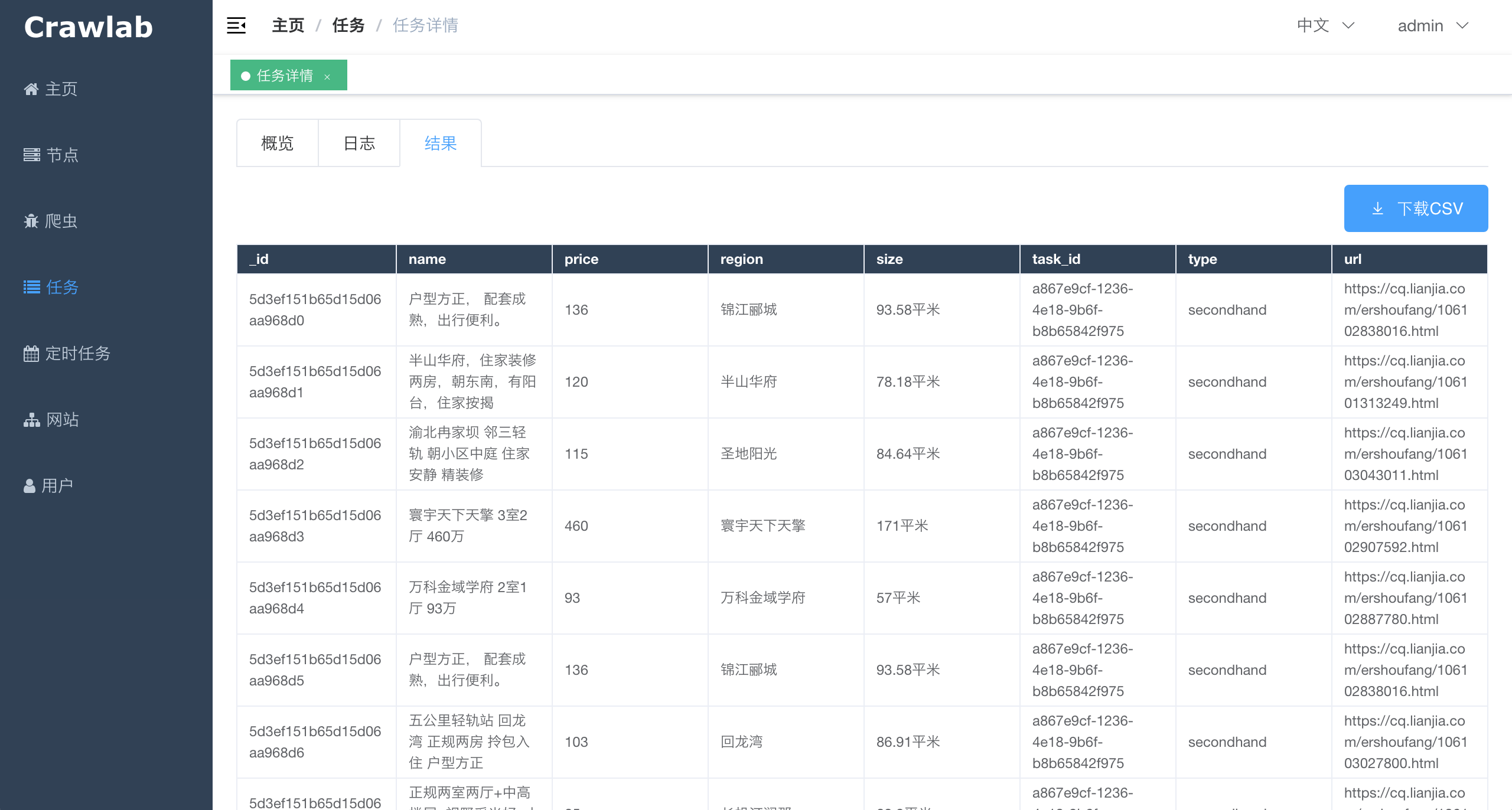

#### 任务详情 - 抓取结果

|

||||

|

||||

|

||||

|

||||

|

||||

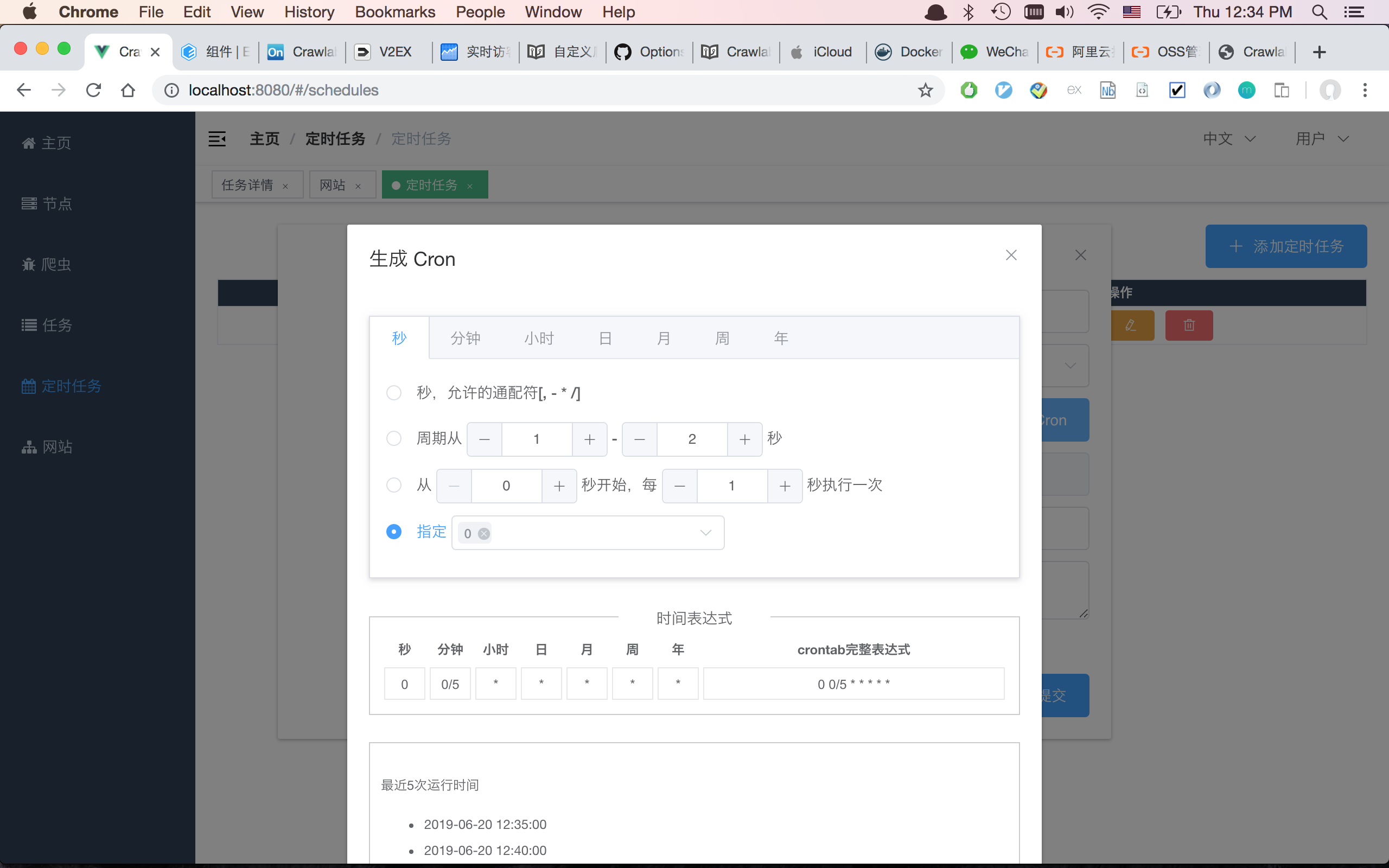

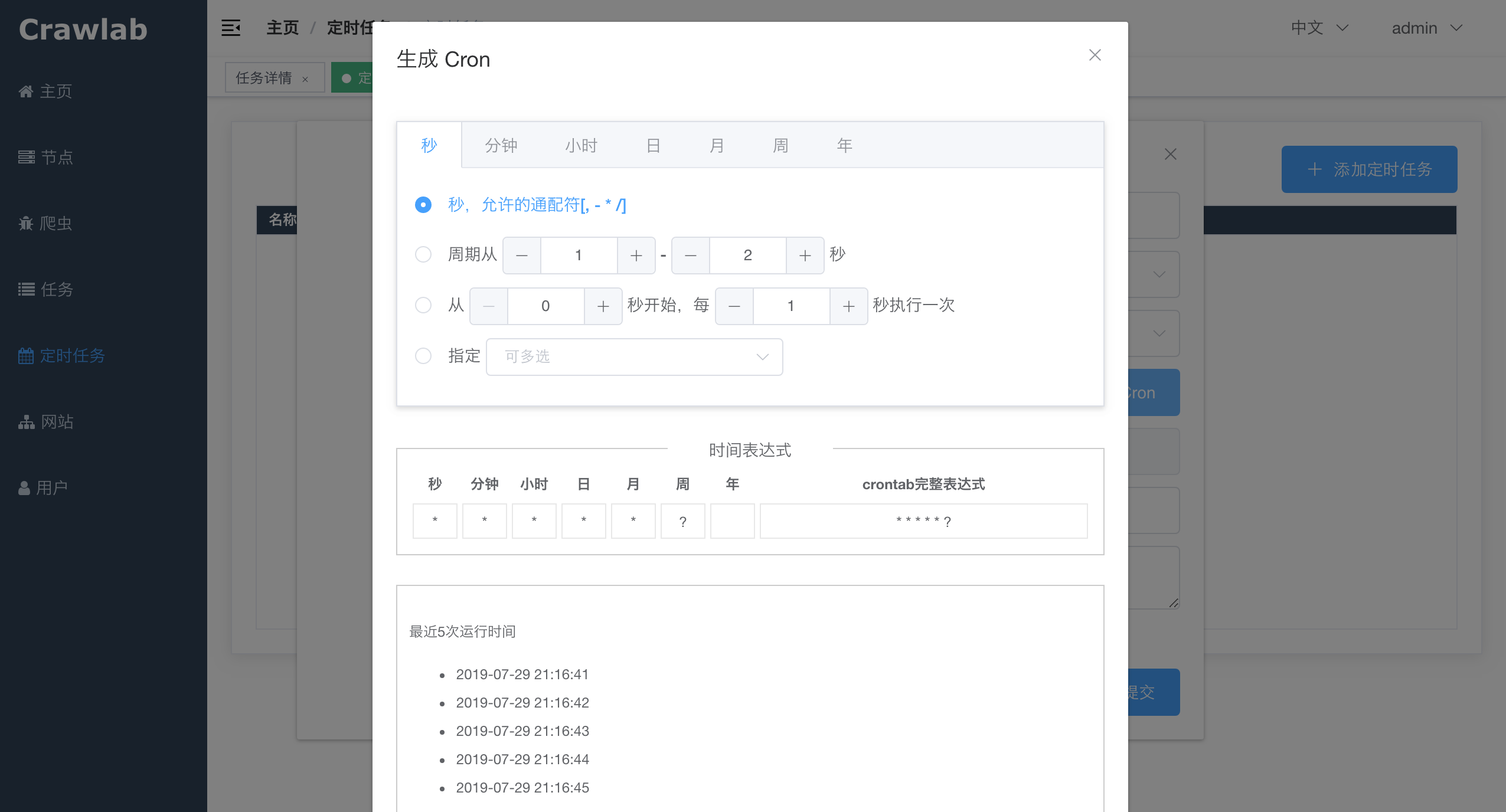

#### 定时任务

|

||||

|

||||

|

||||

|

||||

|

||||

## 架构

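@@ -59,7 +110,7 @@ The Crawlab architecture consists of a master node and multiple worker nodes

The frontend app requests data from the master node, which uses MongoDB and Redis to dispatch, schedule, and deploy tasks. Once a worker node receives a task, it runs the crawling task and stores the results in MongoDB. Compared with the Celery-based versions before `v0.3.0`, the architecture is leaner: the unnecessary node-monitoring module Flower has been removed, and node monitoring is now handled mainly by Redis.

### 主节点 Master Node
### Master Node

The master node is the core of the Crawlab architecture and acts as its central control system.

@@ -68,36 +119,33 @@ The Crawlab architecture consists of a master node and multiple worker nodes
2. Worker node management and communication
3. Spider deployment
4. Frontend and API services
5. Task execution (the master node can also act as a worker node)

The master node communicates with the frontend app and dispatches crawling tasks to worker nodes through Redis. It also synchronizes (deploys) spiders to worker nodes through Redis and MongoDB's GridFS.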

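### Worker Node

The main functions of a worker node are to execute crawling tasks and to store the scraped data and logs. It communicates with the master node through Redis PubSub.
The main functions of a worker node are to execute crawling tasks and to store the scraped data and logs. It communicates with the master node through Redis `PubSub`. By adding more worker nodes, Crawlab can scale horizontally, and different crawling tasks can be assigned to different nodes.

### 爬虫 Spider
### MongoDB

Spider source code or configuration rules are stored on the `App` and need to be deployed to each `worker` node.
MongoDB is Crawlab's operational database. It stores data on nodes, spiders, tasks, scheduled tasks, and so on. In addition, GridFS file storage is the intermediary through which the master node stores spider files and synchronizes them to worker nodes.

### 任务 Task
### Redis

Tasks are triggered and then executed by nodes. Users can view a task's status, logs, and scraped results on the task detail page.
Redis is a very popular key-value database. In Crawlab it mainly handles data communication between nodes. For example, each node stores its own information in the Redis `nodes` hash with `HSET`, and the master node uses that hash to determine which nodes are online.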

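### 前端 Frontend
### Frontend

The frontend is a single-page application based on [Vue-Element-Admin](https://github.com/PanJiaChen/vue-element-admin). It reuses many Element-UI components to build the corresponding views.

### Flower

A Celery plugin for monitoring Celery nodes.

## Integration with Other Frameworks

Tasks are implemented with `Popen` from Python's `subprocess` module. The task ID is exposed to the process running the crawling task as the environment variable `CRAWLAB_TASK_ID`, which is used to associate the scraped data with the task.
A crawling task is essentially executed as a shell command. The task ID is exposed to the process running the crawling task as the environment variable `CRAWLAB_TASK_ID`, which is used to associate the scraped data with the task. In addition, `CRAWLAB_COLLECTION` carries the name of the collection, passed in by Crawlab, in which results are stored.

In your spider program, you need to store the value of `CRAWLAB_TASK_ID` in the database under the key `task_id`. This is how Crawlab knows how to associate crawling tasks with scraped data. Currently, Crawlab only supports MongoDB.
In the spider program, store the value of `CRAWLAB_TASK_ID` under the key `task_id` in the collection named by `CRAWLAB_COLLECTION`. This is how Crawlab knows how to associate crawling tasks with scraped data. Currently, Crawlab only supports MongoDB.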
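
As a minimal sketch of that convention, the Go program below reads the two environment variables and tags a scraped item with `task_id` before it would be written out. The `taggedResult` helper and the sample item are hypothetical, and the MongoDB insert itself is deliberately omitted (the program only prints what it would store), so this shows the data shape rather than a complete spider.

```go
package main

import (
	"encoding/json"
	"fmt"
	"os"
)

// taggedResult copies the item and adds the task ID under the key "task_id",
// so a stored document can be traced back to the task that produced it.
func taggedResult(item map[string]interface{}, taskID string) map[string]interface{} {
	out := make(map[string]interface{}, len(item)+1)
	for k, v := range item {
		out[k] = v
	}
	out["task_id"] = taskID
	return out
}

func main() {
	// Both variables are expected to be set by Crawlab when it runs the task.
	taskID := os.Getenv("CRAWLAB_TASK_ID")
	collection := os.Getenv("CRAWLAB_COLLECTION")

	doc := taggedResult(map[string]interface{}{"title": "example"}, taskID)
	b, err := json.Marshal(doc)
	if err != nil {
		fmt.Fprintln(os.Stderr, "marshal failed:", err)
		os.Exit(1)
	}
	// A real spider would insert doc into the named MongoDB collection here.
	fmt.Printf("would insert into %q: %s\n", collection, b)
}
```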

### Scrapy
### Integration with Scrapy

Below is an example of integrating Crawlab with Scrapy. It uses the task_id and collection_name passed in by Crawlab.

@@ -135,11 +183,20 @@ Crawlab is convenient to use and general-purpose, and can be applied to almost any mainstream
|Framework | Type | Distributed | Frontend | Depends on Scrapyd |
|:---:|:---:|:---:|:---:|:---:|
| [Crawlab](https://github.com/tikazyq/crawlab) | Admin Platform | Y | Y | N
| [Gerapy](https://github.com/Gerapy/Gerapy) | Admin Platform | Y | Y | Y
| [SpiderKeeper](https://github.com/DormyMo/SpiderKeeper) | Admin Platform | Y | Y | Y
| [ScrapydWeb](https://github.com/my8100/scrapydweb) | Admin Platform | Y | Y | Y
| [SpiderKeeper](https://github.com/DormyMo/SpiderKeeper) | Admin Platform | Y | Y | Y
| [Gerapy](https://github.com/Gerapy/Gerapy) | Admin Platform | Y | Y | Y
| [Scrapyd](https://github.com/scrapy/scrapyd) | Web Service | Y | N | N/A

## Related Articles

- [Deployment Guide for the Crawler Management Platform Crawlab (Docker and more)](https://juejin.im/post/5d01027a518825142939320f)
- [[Crawler Notes] How I Built a Spider in 3 Minutes](https://juejin.im/post/5ceb4342f265da1bc8540660)
- [A Step-by-Step Guide to Building a Technical Article Aggregator with Crawlab (Part 2)](https://juejin.im/post/5c92365d6fb9a070c5510e71)
- [A Step-by-Step Guide to Building a Technical Article Aggregator with Crawlab (Part 1)](https://juejin.im/user/5a1ba6def265da430b7af463/posts)

**Note: v0.3.0 switched from the Celery-based Python version to a Golang version. See the documentation for deployment instructions.**

## Community & Sponsorship

If you find Crawlab helpful for your daily development or your company, please add the author on WeChat (tikazyq1) with the note "Crawlab", and the author will add you to the group. Alternatively, you can scan the Alipay QR code below to tip the author, which helps upgrade the team collaboration software or buys a cup of coffee.

@@ -33,9 +33,17 @@ func InitMongo() error {
	var mongoHost = viper.GetString("mongo.host")
	var mongoPort = viper.GetString("mongo.port")
	var mongoDb = viper.GetString("mongo.db")
	var mongoUsername = viper.GetString("mongo.username")
	var mongoPassword = viper.GetString("mongo.password")

	if Session == nil {
		sess, err := mgo.Dial("mongodb://" + mongoHost + ":" + mongoPort + "/" + mongoDb)
		var uri string
		if mongoUsername == "" {
			uri = "mongodb://" + mongoHost + ":" + mongoPort + "/" + mongoDb
		} else {
			uri = "mongodb://" + mongoUsername + ":" + mongoPassword + "@" + mongoHost + ":" + mongoPort + "/" + mongoDb
		}
		sess, err := mgo.Dial(uri)
		if err != nil {
			return err
		}
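
The URI assembly in this hunk concatenates the username and password directly, which may produce an invalid URI when a credential contains characters such as `@` or `/`. One possible alternative, sketched here with only the standard library, builds the string through `net/url` so the credentials are percent-encoded. `buildMongoURI` is a hypothetical helper, not part of the change, and whether the MongoDB driver in use decodes percent-encoded credentials is an assumption that would need checking.

```go
package main

import (
	"fmt"
	"net"
	"net/url"
)

// buildMongoURI assembles a mongodb:// connection string. Credentials are
// included only when a username is set, mirroring the branch in the hunk.
func buildMongoURI(host, port, db, username, password string) string {
	u := url.URL{
		Scheme: "mongodb",
		Host:   net.JoinHostPort(host, port),
		Path:   "/" + db,
	}
	if username != "" {
		// UserPassword percent-encodes reserved characters when rendered.
		u.User = url.UserPassword(username, password)
	}
	return u.String()
}

func main() {
	fmt.Println(buildMongoURI("localhost", "27017", "crawlab_test", "", ""))
	fmt.Println(buildMongoURI("localhost", "27017", "crawlab_test", "user", "p@ss"))
}
```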

@@ -91,11 +91,11 @@ func main() {
		app.POST("/nodes/:id", routes.PostNode) // update node
		app.GET("/nodes/:id/tasks", routes.GetNodeTaskList) // node task list
		app.GET("/nodes/:id/system", routes.GetSystemInfo) // node system info
		app.DELETE("/nodes/:id", routes.DeleteNode) // delete node
		// spiders
		app.GET("/spiders", routes.GetSpiderList) // spider list
		app.GET("/spiders/:id", routes.GetSpider) // spider detail
		app.PUT("/spiders", routes.PutSpider) // upload spider
		app.POST("/spiders", routes.PublishAllSpiders) // publish all spiders
		app.POST("/spiders", routes.PutSpider) // upload spider
		app.POST("/spiders/:id", routes.PostSpider) // update spider
		app.POST("/spiders/:id/publish", routes.PublishSpider) // publish spider
		app.DELETE("/spiders/:id", routes.DeleteSpider) // delete spider

@@ -108,3 +108,21 @@ func GetSystemInfo(c *gin.Context) {
		Data: sysInfo,
	})
}

func DeleteNode(c *gin.Context) {
	id := c.Param("id")
	node, err := model.GetNode(bson.ObjectIdHex(id))
	if err != nil {
		HandleError(http.StatusInternalServerError, c, err)
		return
	}
	err = node.Delete()
	if err != nil {
		HandleError(http.StatusInternalServerError, c, err)
		return
	}
	c.JSON(http.StatusOK, Response{
		Status: "ok",
		Message: "success",
	})
}

@@ -130,7 +130,7 @@ func ExecuteShellCmd(cmdStr string, cwd string, t model.Task, s model.Spider) (e

	// add the task's environment variables
	for _, env := range s.Envs {
		cmd.Env = append(cmd.Env, env.Name + "=" + env.Value)
		cmd.Env = append(cmd.Env, env.Name+"="+env.Value)
	}

	// start a goroutine to monitor the process

@@ -344,14 +344,16 @@ func ExecuteTask(id int) {
	}

	// start a cron executor to count the task's results
	cronExec := cron.New(cron.WithSeconds())
	_, err = cronExec.AddFunc("*/5 * * * * *", SaveTaskResultCount(t.Id))
	if err != nil {
		log.Errorf(GetWorkerPrefix(id) + err.Error())
		return
	if spider.Col != "" {
		cronExec := cron.New(cron.WithSeconds())
		_, err = cronExec.AddFunc("*/5 * * * * *", SaveTaskResultCount(t.Id))
		if err != nil {
			log.Errorf(GetWorkerPrefix(id) + err.Error())
			return
		}
		cronExec.Start()
		defer cronExec.Stop()
	}
	cronExec.Start()
	defer cronExec.Stop()

	// execute the shell command
	if err := ExecuteShellCmd(cmd, cwd, t, spider); err != nil {

@@ -360,9 +362,11 @@ func ExecuteTask(id int) {
	}

	// update the task's result count
	if err := model.UpdateTaskResultCount(t.Id); err != nil {
		log.Errorf(GetWorkerPrefix(id) + err.Error())
		return
	if spider.Col != "" {
		if err := model.UpdateTaskResultCount(t.Id); err != nil {
			log.Errorf(GetWorkerPrefix(id) + err.Error())
			return
		}
	}

	// finish the process

@@ -2,20 +2,28 @@ version: '3.3'
services:
  master:
    image: tikazyq/crawlab:latest
    container_name: crawlab
    volumes:
      - /home/yeqing/config.py:/opt/crawlab/crawlab/config/config.py # backend config file
      - /home/yeqing/.env.production:/opt/crawlab/frontend/.env.production # frontend config file
    container_name: crawlab-master
    environment:
      CRAWLAB_API_ADDRESS: "192.168.99.100:8000"
      CRAWLAB_SERVER_MASTER: "Y"
      CRAWLAB_MONGO_HOST: "mongo"
      CRAWLAB_REDIS_ADDRESS: "redis:6379"
    ports:
      - "8080:8080" # nginx
      - "8000:8000" # app
      - "8080:8080" # frontend
      - "8000:8000" # backend
    depends_on:
      - mongo
      - redis
  worker:
    image: tikazyq/crawlab:latest
    container_name: crawlab-worker
    environment:
      CRAWLAB_SERVER_MASTER: "N"
      CRAWLAB_MONGO_HOST: "mongo"
      CRAWLAB_REDIS_ADDRESS: "redis:6379"
    depends_on:
      - mongo
      - redis
    entrypoint:
      - /bin/sh
      - /opt/crawlab/docker_init.sh
      - master
  mongo:
    image: mongo:latest
    restart: always

@@ -25,4 +33,4 @@ services:
    image: redis:latest
    restart: always
    ports:
      - "6379:6379"
      - "6379:6379"

@@ -1,4 +1,4 @@
docker run -d --rm --name crawlab \
docker run -d --restart always --name crawlab \
        -e CRAWLAB_REDIS_ADDRESS=192.168.99.1:6379 \
        -e CRAWLAB_MONGO_HOST=192.168.99.1 \
        -e CRAWLAB_SERVER_MASTER=Y \

@@ -1,4 +1,4 @@
docker run --rm --name crawlab \
docker run --restart always --name crawlab \
        -e CRAWLAB_REDIS_ADDRESS=192.168.99.1:6379 \
        -e CRAWLAB_MONGO_HOST=192.168.99.1 \
        -e CRAWLAB_SERVER_MASTER=N \

@@ -23,17 +23,28 @@ export default {

<style scoped>
  .log-item {
    display: flex;
    display: table;
  }

  .log-item:first-child .line-no {
    padding-top: 10px;
  }

  .log-item .line-no {
    margin-right: 10px;
    display: table-cell;
    color: #A9B7C6;
    background: #313335;
    padding-right: 10px;
    text-align: right;
    flex-basis: 40px;
    width: 70px;
  }

  .log-item .line-content {
    display: inline-block;
    padding-left: 10px;
    display: table-cell;
    /*display: inline-block;*/
    word-break: break-word;
    flex-basis: calc(100% - 50px);
  }
</style>

@@ -1,6 +1,7 @@
<template>
  <virtual-list
    :size="18"
    class="log-view"
    :size="6"
    :remain="100"
    :item="item"
    :itemcount="logData.length"

@@ -60,19 +61,12 @@ export default {

<style scoped>
  .log-view {
    margin-top: 0 !important;
    min-height: 100%;
    overflow-y: scroll;
    list-style: none;
  }

  .log-view .log-line {
    display: flex;
  }

  .log-view .log-line:nth-child(odd) {
  }

  .log-view .log-line:nth-child(even) {
    color: #A9B7C6;
    background: #2B2B2B;
  }

</style>

@@ -175,6 +175,7 @@ export default {
  'Create Time': '创建时间',
  'Start Time': '开始时间',
  'Finish Time': '结束时间',
  'Update Time': '更新时间',

  // Deploy
  'Time': '时间',

@@ -160,6 +160,7 @@ export const constantRouterMap = [
    name: 'Site',
    path: '/sites',
    component: Layout,
    hidden: true,
    meta: {
      title: 'Site',
      icon: 'fa fa-sitemap'

@@ -25,6 +25,9 @@ const user = {
      const userInfoStr = window.localStorage.getItem('user_info')
      if (!userInfoStr) return {}
      return JSON.parse(userInfoStr)
    },
    token () {
      return window.localStorage.getItem('token')
    }
  },

@@ -114,7 +114,7 @@
              <el-button type="primary" icon="el-icon-search" size="mini" @click="onView(scope.row)"></el-button>
            </el-tooltip>
            <el-tooltip :content="$t('Remove')" placement="top">
              <el-button type="danger" icon="el-icon-delete" size="mini" @click="onRemove(scope.row)"></el-button>
              <el-button v-if="scope.row.status !== 'online'" type="danger" icon="el-icon-delete" size="mini" @click="onRemove(scope.row)"></el-button>
            </el-tooltip>
          </template>
        </el-table-column>

@@ -84,7 +84,9 @@
      <el-form :model="spiderForm" ref="addConfigurableForm" inline-message>
        <el-form-item :label="$t('Upload Zip File')" label-width="120px" name="site">
          <el-upload
            :action="$request.baseUrl + '/spiders/manage/upload'"
            :action="$request.baseUrl + '/spiders'"
            :headers="{Authorization:token}"
            :on-change="onUploadChange"
            :on-success="onUploadSuccess"
            :file-list="fileList">
            <el-button type="primary" icon="el-icon-upload">{{$t('Upload')}}</el-button>

@@ -229,7 +231,8 @@

<script>
import {
  mapState
  mapState,
  mapGetters
} from 'vuex'
import dayjs from 'dayjs'
import CrawlConfirmDialog from '../../components/Common/CrawlConfirmDialog'

@@ -258,11 +261,13 @@ export default {
      // tableData,
      columns: [
        { name: 'name', label: 'Name', width: '180', align: 'left' },
        { name: 'site_name', label: 'Site', width: '140', align: 'left' },
        // { name: 'site_name', label: 'Site', width: '140', align: 'left' },
        { name: 'type', label: 'Spider Type', width: '120' },
        // { name: 'cmd', label: 'Command Line', width: '200' },
        // { name: 'lang', label: 'Language', width: '120', sortable: true },
        { name: 'last_run_ts', label: 'Last Run', width: '160' }
        { name: 'last_run_ts', label: 'Last Run', width: '160' },
        { name: 'create_ts', label: 'Create Time', width: '160' },
        { name: 'update_ts', label: 'Update Time', width: '160' }
        // { name: 'last_7d_tasks', label: 'Last 7-Day Tasks', width: '80' },
        // { name: 'last_5_errors', label: 'Last 5-Run Errors', width: '80' }
      ],

@@ -278,6 +283,9 @@ export default {
      'spiderList',
      'spiderForm'
    ]),
    ...mapGetters('user', [
      'token'
    ]),
    filteredTableData () {
      return this.spiderList
        .filter(d => {

@@ -469,6 +477,8 @@ export default {
        }
      })
    },
    onUploadChange () {
    },
    onUploadSuccess () {
      // clear fileList
      this.fileList = []
